Feeding the Fire: Psychology, Engagement, and Algorithmic Media

If you're reading this, and you don't have a Facebook or Xitter account (because you either deleted it, or never had one to begin with), give yourself a pat on the back. This shit is hard, as evidenced by the fact that Facebook, to pick one example, still has three billion active users in any given month.

The Tl;dr: In This Essay, We will...

Take a look at the vicious cycle of:

- human psychology (rage, heightened emotions),

- content engagement,

- monetization, and

- algorithms

And how this can cause harm to us as individuals and to our society.

We'll end with a brief note on how non-algorithmic alternatives do exist, and why it may still be hard for people to leave the existing platforms.

What's in a Name?

The situation we currently find ourselves in is that, somehow, the billionaire-owned, walled-garden "social networks" are still popular.

At this point, "social media" may be a bit of a misnomer. I like a term that I recently came across in the shownotes of a podcast I enjoy:[1] Let's call them "algorithmic media" instead. I find that this name is a better fit, for a couple of reasons:

- These are platforms that use engagement-optimizing algorithms to determine what content you get (and don't get) to see.

- The raison d'etre of these platforms isn't to facilitate being social, or to facilitate communication between friends and family. They may have started out that way, but these days it's all about pushing content to users, not facilitating interaction between users.

- There are non-algorithmic alternatives - alternatives that put the social back in "social media."

At this moment in time, more so than ever, algorithmic media may not be serving us - their users - anymore: Not serving us as individual humans, not serving us as a society, not serving us in how we govern ourselves politically.

So What's the Problem?

If you follow privacy, digital-sovereignty, or anti-surveillance conversations, none of this will be news to you. But if you’re newer here - welcome! - or sharing this with someone just beginning to explore alternatives to billionaire-owned algorithmic media, here's a quick recap of the lowlights:

- These days, what you're primarily getting to see on [insert billionaire-owned ~~social~~ algorithmic media platform of choice here] is not actually the content you signed up to see - like your friends' posts, or posts by bands you follow - but "promoted" posts and ads. In short, "things that the company's shareholders wished you would want to see," to paraphrase Cory Doctorow.

- The goal of these algorithmic platforms is not to show you the stuff you need (or want) to see in as little time as possible; their goal is to keep your eyeballs on the screen for longer than is technically needed, so that you can be served more ads and more tailored content. They are huge time sinks.

- The most engaging content - the kind that we don't just scroll past, but the kind where we linger, pause, rewind, and share the link with our friends - is the kind that enrages us or our community, or is otherwise negative and elicits strong emotions.

- You've probably heard of the "Outrage Machine" - that is a closely related concept.

- Algorithms can favour - and often end up favouring - "emotionally charged, out-group hostile content," as that naturally sees more engagement. This creates a dangerous downward spiral and a self-reinforcing pattern of engagement and rage.

- There is a risk of more problematic content being "recommended" the deeper the recommendation trails go.

- These platforms host horrific content, often through turning a blind eye or through moderation that fails at scale. Grok, as one recent example, produces absolutely awful stuff at scale, on request. Whether or not vile content like this is explicitly part of the algorithmic business model is neither here nor there.

- Extended social media use, especially "heavy habitual" and "unintentional" use, is linked to poorer mental health, which the people running the platforms may or may not already be aware of. This has been likened to encouraging addictive behaviour.

- Most of the big players are headquartered and hosted in the United States. Given the global political ~~mess~~ context we find ourselves in, I am sure I do not need to explain why this may be a problem.

- And to top it all off: These platforms track everything you do, whether through ads or through their own trackers. What you do on other websites. What you look at, what you share with your friends, who your friends are. Yes, even if you do not have an account on the platform in question. The list goes on.

- And all that juicy, juicy data doesn't just get stored away somewhere - it gets used to extrapolate who you are, as a person. Information that you did not even openly post about gets triangulated, so that the networks and advertisers can make fairly accurate inferences about your situation and your thoughts with regard to various topics you never posted about - like your demographics, your location, your religious affiliation. Even your mental health, financial situation, or employment status. And all that juicy information then gets passed on to the highest bidder for "tailored ads."

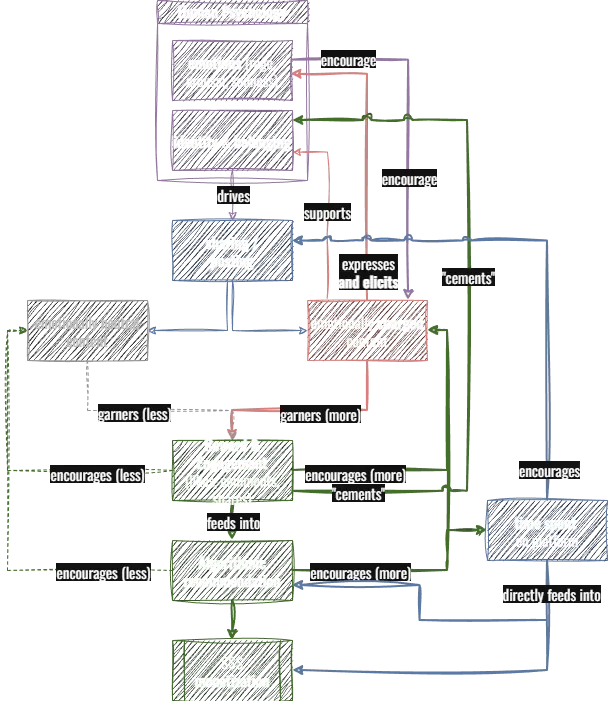

As things stand, many of us thus find ourselves "locked in" to platforms who keep us in a cycle much like this one:

A flowchart visualizing the relationships between human psychology, content sharing, engagement, rewards, and monetization. (Which, in case you can't tell, is not AI generated. Created by hand using draw.io, for better or worse.) I have done my best to provide a detailed verbal description of the flow below.

My attempt at a verbal summary of the above flowchart is:

- Human psychology, especially heightened emotions, but also more nebulous concepts like identity and belonging, drive the sharing and posting of content.[2]

- That content can be emotionally neutral, or emotionally charged (often evoking and/or evoked by rage, sadness, and/or heightened "arousal" more generally, in the psychological sense).

- The flowchart focuses on the latter type, as that is the kind that feeds more prominently into the engagement loop. This emotionally charged content:

  - Expresses the poster's emotions, and elicits emotions in others; and

  - Garners reward and engagement (likes, comments, shares).

- Engagement and rewards tend to be proportionally greater for emotionally charged content.

- This more "popular" content then frequently receives algorithmic boosts, precisely because it is already "popular."

- At the same time, increased engagement & rewards create a feedback loop to the poster, encouraging them to create more such content.

- The more the algorithm "gets it right," the more the users may feel like they are seeing content "relevant" to them, and are encouraged to spend more time on the platform.

  - This further encourages sharing & posting, and

  - also directly funnels into monetization via ad & promoted content exposure.

- Notably missing: The concept of ad tracking, which I thought of incorporating here, but then figured the flowchart would assume roughly the size of a large boulder the size of a small boulder, and I didn't want to overwhelm either myself or the readers, so I've left it out for now.
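For the more code-minded readers, here is a toy simulation of the loop described above. To be very clear: this is a sketch under made-up assumptions, not how any real platform ranks content. The names (`Post`, `rank_by_engagement`, `charged_share`) and all the numbers (`BASE_RATE`, `CHARGE_BONUS`, the audience size, the sample posts) are hypothetical. It only illustrates the two claims from the summary: charged content draws more engagement per view, and a ranker that boosts whatever is already popular amplifies that difference over time.

```python
from dataclasses import dataclass

# All constants are invented for illustration, not measured values.
BASE_RATE = 0.02     # assumed engagement per impression for neutral content
CHARGE_BONUS = 0.08  # assumed extra engagement per impression at charge 1.0
AUDIENCE = 1000      # assumed number of people who see the top feed slot


@dataclass
class Post:
    post_id: int             # higher id = posted more recently
    charge: float            # 0.0 = neutral, 1.0 = highly emotionally charged
    engagement: float = 0.0  # accumulated likes, comments, shares


def engagement_rate(post: Post) -> float:
    """Charged content gets proportionally more engagement per view."""
    return BASE_RATE + CHARGE_BONUS * post.charge


def rank_chronological(posts):
    """Non-algorithmic feed: newest first, engagement is ignored."""
    return sorted(posts, key=lambda p: p.post_id, reverse=True)


def rank_by_engagement(posts):
    """Engagement-optimizing feed: most engaged first, ties go to the newest."""
    return sorted(posts, key=lambda p: (p.engagement, p.post_id), reverse=True)


def simulate(posts, ranker, rounds=5):
    """Show the feed for several rounds; higher slots get more impressions."""
    feed = [Post(p.post_id, p.charge) for p in posts]  # fresh copies
    for _ in range(rounds):
        for slot, post in enumerate(ranker(feed)):
            impressions = AUDIENCE / (slot + 1)
            post.engagement += impressions * engagement_rate(post)
    return feed


def charged_share(posts, threshold=0.5):
    """Fraction of all engagement that went to emotionally charged posts."""
    total = sum(p.engagement for p in posts)
    charged = sum(p.engagement for p in posts if p.charge > threshold)
    return charged / total


# Six sample posts; two of them (ids 0 and 3) are emotionally charged.
SAMPLE_POSTS = [Post(i, c) for i, c in enumerate([0.9, 0.1, 0.2, 0.7, 0.0, 0.1])]

if __name__ == "__main__":
    chrono = simulate(SAMPLE_POSTS, rank_chronological)
    ranked = simulate(SAMPLE_POSTS, rank_by_engagement)
    print(f"charged share, chronological feed: {charged_share(chrono):.2f}")
    print(f"charged share, engagement-ranked feed: {charged_share(ranked):.2f}")
```

Both feeds start out in the same order. In the chronological feed, the charged posts stay wherever their timestamps put them. In the engagement-ranked feed, they climb to the top within a few rounds and end up with a noticeably larger share of all engagement, which is the self-reinforcing part of the cycle.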

We Just Have to Suck it up, Right? We're Trapped in This Cycle?

Short answer: No.

For a number of reasons, one of the most critical of which is that the algorithmic media platforms themselves did not start out this way. YouTube did not have ads when it launched. Instagram had a non-algorithmic, chronological Most Recent feed until 2016. Xitter didn't show promoted content in people's timelines until 2010. Facebook, according to Cory Doctorow, began as an alternative to MySpace that promised it wouldn't spy on you.

This illustrates two things:

- The enshittification of these platforms is a development that has occurred over time. The algorithmic media were not always thus. This means none of this is a god-given inevitability. What has changed before can change again.

- We are not stuck with what these platforms have become.

  - We may need to be the frog sitting in the pot of ~~warm~~ ~~warmer~~ slightly hot water, except we'll need to actually notice that we're getting boiled. But once we do that, we're off to the races, as there are alternatives out there.

  - These alternatives are much, much less likely to be enshittified, as they are not beholden to a billionaire owner and/or shareholders. They work in vastly different ways than the legacy algorithmic media we know and don't love.

Cool! So now we have the Cliff's Notes of why people may want to look beyond these billionaire-owned platforms and look for alternatives. Alternatives that are more privacy-friendly, that show you the content that you signed up to see, and whose explicit goal is not to keep you glued to the screen for as long as possible and do a number on your mental health along the way. Great news:

The alternatives exist. They are out there. ActivityPub, the Fediverse, Mastodon, Pixelfed, Ghost, many others. They are out there and you can join them today.

- No clue where to start? No problem. Use your favourite search engine (DuckDuckGo, StartPage, and Qwant are some great ones to start with) to look up the words in the previous paragraph.

- Check out Paris Marx's guide on "Getting off of US Tech", as coincidentally, many/most of the legacy algorithmic media platforms are US giants. Or...

- ... wait for the Alternatives series that'll be forthcoming on this blog.

And yet... Even folks who know all of the above, even folks who agree with all of the above, folks who continue to have shoddy experiences on the algorithmic networks, often cannot seem to shake themselves loose from those platforms.

Why is that? Because of Network Effects. More on those in the next post!

As always, please feel free to get in touch with thoughts or comments! You can email or find me on Mastodon.

[1] Dr. Thomas Schwenke in Episode 145 of "Auslegungssache," by c't.

[2] Side note: There is a lot more to this, like social goals, negativity biases, etc., but I didn't want to explode the flowchart right on the first node... If you are interested in a more nuanced approach, click some of the reference links in this article and explore from there!